Hello!

This week in tech, enterprises are reshaping how they use AI, moving from generic models to tools that understand their users. Anthropic is advancing this with Claude Code Channels on Telegram and Discord, while OpenAI’s GPT-5.4 mini and nano target the subagent era. Meanwhile, the Vercel Open Source Program has announced its Winter 2026 cohort.

The pattern is clear: specialization and user-centric design. For CTOs, this means prioritizing tools that improve UX and operations.

At Pagepro, we support these shifts with Next.js and Sanity migrations.

Grab your coffee, settle in, and enjoy Frictionless!

In the Queue

Deepen Your Expertise

Vercel’s Winter 2026 Open Source cohort includes projects like Answer Overflow and hot-updater, with some already serving over a million users each month. This shows how open-source tools are running at production scale rather than staying as side projects.

Reduce Friction

Software architects work between engineering and business, where decisions often come down to trade-offs like speed vs. scalability or short-term vs. long-term outcomes. Much of the role involves influencing teams without direct authority and keeping everyone aligned, while handling growing system complexity and newer areas like AI. Staying close to real code is still necessary; otherwise, designs can break in production.

Technical interviews are changing as AI tools become harder to exclude, so teams are focusing more on how candidates use them instead of trying to ban them. The key difference is whether candidates can work with AI output critically rather than rely on it blindly. This puts more emphasis on judgment and problem-solving than writing code without assistance.

The spotlight effect makes engineers think others notice their actions more than they actually do. This often leads to hesitation when applying for roles, speaking up, or sharing ideas. In reality, most people are focused on their own work, so this perception can hold people back more than any real constraint.

AI Corner

Enterprises are moving away from generic AI tools and toward systems tailored to their users, data, and workflows, as off-the-shelf models often lack the context needed for reliable results. Examples like Zoom’s AI Companion show how features such as custom summaries can adapt to specific users and use cases. This shift shows that enterprise AI is less about raw model capability and more about how well it fits real workflows.

Anthropic introduced Claude Code Channels, a feature that lets developers interact with its coding agent through messaging apps like Telegram and Discord instead of staying in a terminal. This allows users to send instructions and receive updates while the agent runs tasks remotely. The release positions Claude Code as a more flexible alternative to tools like OpenClaw by making agent workflows easier to access and manage outside a local environment.

OpenAI’s GPT-5.4 mini and nano models are smaller, lower-cost versions that approach the performance of the full model, with mini scoring 54.38% on SWE-bench Pro. They are designed to handle tasks delegated by larger models, making it possible to run more work at lower cost. This setup uses different models for different tasks instead of relying on a single model.
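To make the delegation idea concrete, here is a minimal sketch of how an orchestrator might route subtasks to different model sizes. Everything in it is an assumption for illustration: the tier labels ("nano", "mini", "full") are placeholders rather than real API model identifiers, and the complexity score and thresholds are invented, not taken from OpenAI's documentation.

```python
from dataclasses import dataclass


@dataclass
class Task:
    description: str
    complexity: int  # hypothetical score from 1 (trivial) to 10 (hard)


def pick_tier(task: Task) -> str:
    """Return a hypothetical model tier label for a task.

    The thresholds are arbitrary; a real system would tune them
    against cost and quality measurements.
    """
    if task.complexity <= 3:
        return "nano"
    if task.complexity <= 7:
        return "mini"
    return "full"


def plan(tasks: list[Task]) -> dict[str, str]:
    """Map each task description to the tier that should handle it."""
    return {task.description: pick_tier(task) for task in tasks}


if __name__ == "__main__":
    work = [
        Task("rename a variable", 2),
        Task("write unit tests for a module", 5),
        Task("redesign the caching layer", 9),
    ]
    for description, tier in plan(work).items():
        print(f"{tier:>4}  {description}")
```

The point of the sketch is the shape of the setup: the expensive model is reserved for the hard tasks, while routine work goes to cheaper tiers.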

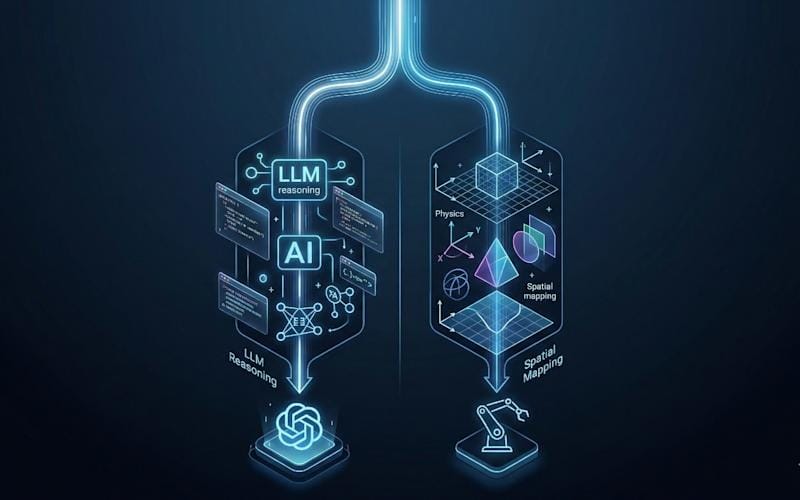

AI systems still struggle to understand how the physical world works, since large language models are trained on text rather than real-world interactions. The article outlines three approaches to address this: generative world models that simulate environments, predictive models like JEPA that learn patterns from observation, and hybrid systems that combine perception with planning. Together, these approaches aim to help AI predict outcomes, reason about physical changes, and act more reliably in real-world environments.


Just Cool

Attackers compromised the widely used Trivy vulnerability scanner by tampering with its release process, allowing malicious code to spread through trusted channels. Because Trivy is often used in CI/CD pipelines, the breach exposed sensitive data like tokens and secrets across many environments. The incident shows how a single compromised tool in the software supply chain can affect a large number of users.
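One general mitigation for this class of risk is to pin the expected digest of a tool and check it before the tool runs in a pipeline. The sketch below shows that idea with Python's standard library only. It is not a description of how the Trivy incident happened or was fixed, the function names are hypothetical, and it assumes the pinned digest was recorded from a source you trust independently of the release channel.

```python
import hashlib
import hmac


def sha256_of(data: bytes) -> str:
    """Return the hex-encoded SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(data: bytes, pinned_digest: str) -> bool:
    """Check downloaded bytes against a digest pinned ahead of time.

    hmac.compare_digest performs a constant-time comparison.
    """
    expected = pinned_digest.strip().lower()
    return hmac.compare_digest(sha256_of(data), expected)


if __name__ == "__main__":
    artifact = b"pretend this is a downloaded release binary"
    pinned = sha256_of(artifact)  # in practice, stored in the repo or CI config

    print(verify_artifact(artifact, pinned))                # unchanged artifact
    print(verify_artifact(artifact + b"tampered", pinned))  # modified artifact
```

A check like this only catches changes made after the digest was pinned; if the digest is refreshed automatically from the same compromised channel, it offers no protection.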

A complete ML platform can be built locally by connecting tools that cover the full lifecycle, from experiment tracking to deployment and CI/CD. It shows how to handle common production issues like code drift, data inconsistencies, and model degradation while managing features, validating data, serving models, and monitoring performance. The result is a setup that turns experiments into a reliable workflow instead of something that breaks in production.

Let’s Stay in Touch! 📨

Do you have any comments about this newsletter issue or questions you want to ask? Drop me a message or book a meeting.